When I started, our system was a big, fat monolith. A real sluggish behemoth that could only be moved with great effort. Sure, some brave souls had already tried to extract a few parts, but no one had really followed through.

Brave New World – Or Not?

Half a year after I started, the idea came up to outsource large parts of development to India. And by “large parts,” they actually meant the entire architecture. Spoiler: That went horribly wrong.

As a Plan B, I was then given the task of extracting two major components from the application. To keep things “simple,” we decided to split the existing layered architecture horizontally. In hindsight, a rather dumb idea—but hey, you learn as you go.

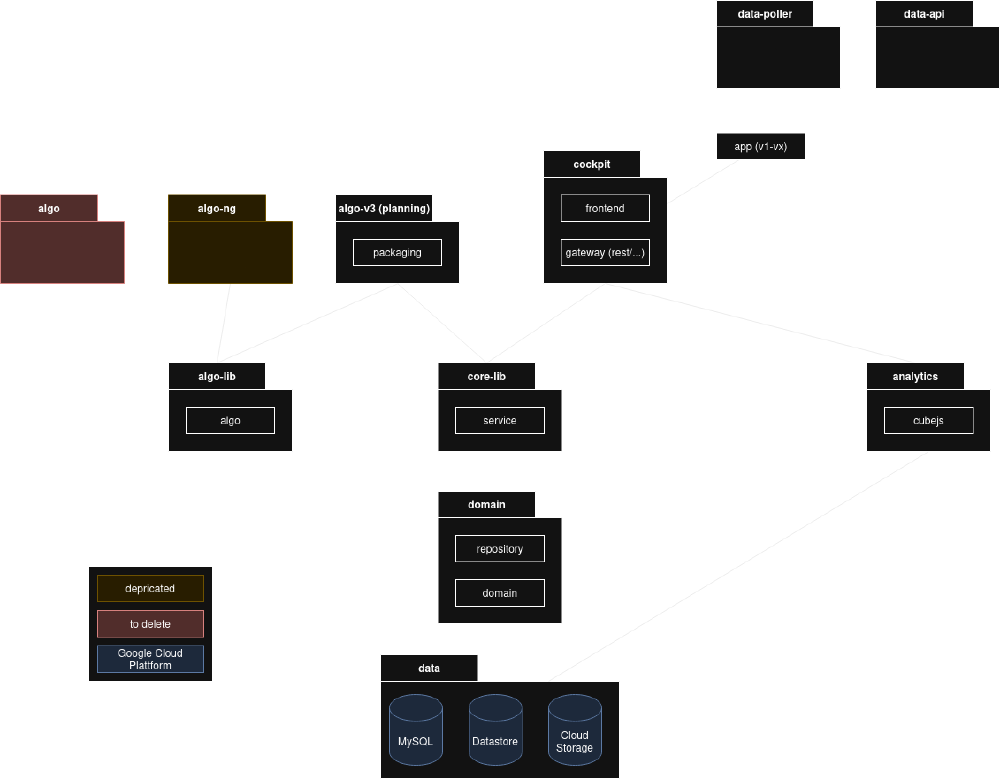

This is what it looked like:

Cockpit:

- Service

- Web (Frontend)

- Web & App (Gateway)

- Security

- Error Handling

- Config (Security & Web)

Algo-v3:

- Service

- “Microservice” for all planning tasks

- Simulation

Core-lib:

- Java Library

- Services Layer

- Default Config

- External Interfaces (Twilio, Stream, GCP, etc.)

- Utils/Helper

Domain:

- Java Library

- Mapping of a model to a data source

- Data queries

- Enforcing rules (string length, required fields, default values, etc.)
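To make the last bullet concrete, here is a minimal sketch of the kind of rule enforcement a domain library like this could own. It is not taken from the actual code base: the `Customer` type, its fields, the 100-character limit, and the `"en"` default are all hypothetical, and the real library may well have used annotations on mapped entities instead.

```java
import java.util.Objects;

class DomainRulesDemo {
    public static void main(String[] args) {
        // No language given, so the default is applied.
        Customer customer = new Customer("Acme Cleaning", null);
        System.out.println(customer);
    }
}

// Hypothetical domain type; field names and limits are illustrative only.
record Customer(String name, String language) {

    static final int MAX_NAME_LENGTH = 100;       // assumed limit
    static final String DEFAULT_LANGUAGE = "en";  // assumed default

    // Compact constructor: every Customer instance has passed these checks.
    Customer {
        // Required field
        Objects.requireNonNull(name, "name is required");
        // String length
        if (name.isBlank() || name.length() > MAX_NAME_LENGTH) {
            throw new IllegalArgumentException(
                    "name must have 1 to " + MAX_NAME_LENGTH + " characters");
        }
        // Default value
        if (language == null || language.isBlank()) {
            language = DEFAULT_LANGUAGE;
        }
    }
}
```

Because the checks live in the constructor, no layer above the domain library can create an invalid object.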

Scalability & Resilience

One of the main reasons for the split was, of course, scalability. Simply throwing more RAM and CPU at it wasn’t the most elegant solution. So: horizontal scaling!

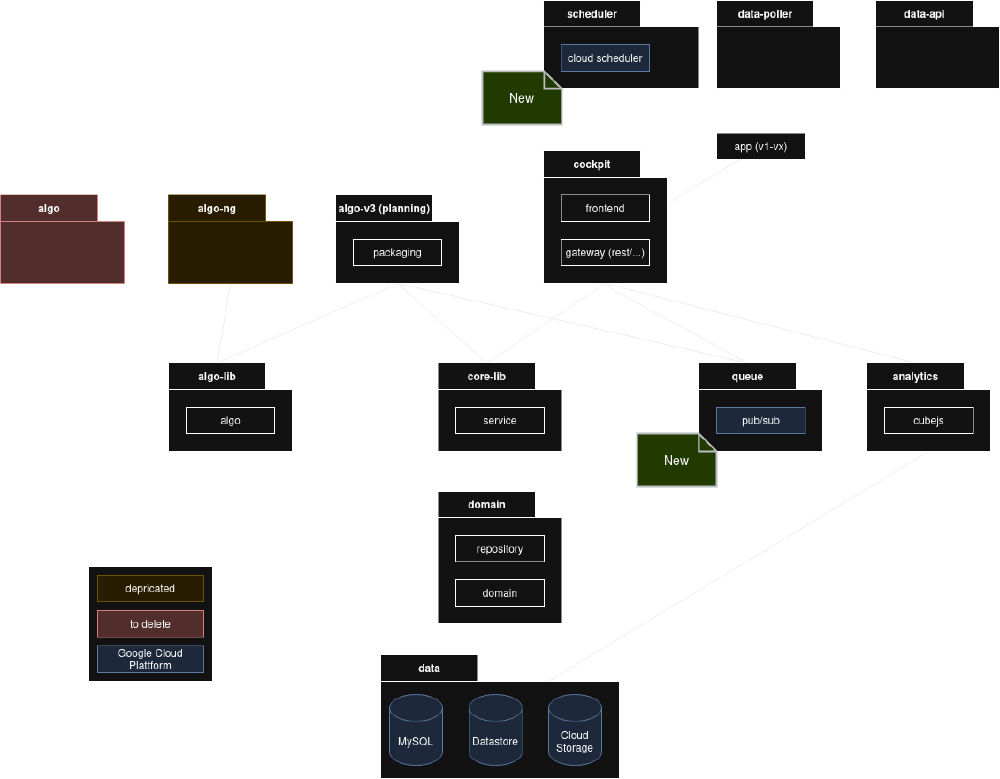

Problem: The application had internal scheduling. Meaning: Every instance brought its own scheduler along, so more instances didn’t distribute the load but just created more chaos.

Solution: Move scheduling out of the application and into Google Cloud Scheduler. Now the application could run as multiple instances, but all the load of a triggered job still ended up on a single instance. Bad luck. Next step: Queue up the scheduled jobs per tenant in Pub/Sub and let the instances subscribe to them. Bam, load distributed.

Nice job! Simple, pragmatic, and it works.
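The per-tenant fan-out can be sketched in plain Java. This is a minimal in-memory sketch under stated assumptions, not our production code: a `BlockingQueue` stands in for the Pub/Sub subscription, threads stand in for application instances, and `TenantJobFanOut`, `TenantJob`, `publishPerTenant`, and `drain` are hypothetical names. It only shows the idea: one message per tenant, with instances competing for messages.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.LinkedBlockingQueue;

class TenantJobFanOut {

    // Hypothetical message: one scheduled job for one tenant.
    record TenantJob(String tenantId, String jobName) {}

    // Scheduler trigger: enqueue one message per tenant instead of running
    // the whole job on whichever instance received the trigger.
    static void publishPerTenant(BlockingQueue<TenantJob> queue,
                                 String jobName, List<String> tenantIds) {
        for (String tenantId : tenantIds) {
            queue.add(new TenantJob(tenantId, jobName));
        }
    }

    // Starts `instances` worker threads that compete for messages until the
    // queue is empty. Returns tenantId -> name of the instance that handled it.
    static Map<String, String> drain(BlockingQueue<TenantJob> queue, int instances) {
        Map<String, String> handledBy = new ConcurrentHashMap<>();
        List<Thread> workers = new ArrayList<>();
        for (int i = 0; i < instances; i++) {
            String instanceName = "instance-" + i;
            Thread worker = new Thread(() -> {
                TenantJob job;
                // poll() removes a message atomically, so in this in-memory
                // stand-in each job is handled by exactly one instance.
                while ((job = queue.poll()) != null) {
                    handledBy.put(job.tenantId(), instanceName);
                }
            });
            workers.add(worker);
            worker.start();
        }
        for (Thread worker : workers) {
            try {
                worker.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting for workers", e);
            }
        }
        return handledBy;
    }

    public static void main(String[] args) {
        BlockingQueue<TenantJob> queue = new LinkedBlockingQueue<>();
        publishPerTenant(queue, "nightly-planning",
                List.of("tenant-a", "tenant-b", "tenant-c", "tenant-d"));
        // Which instance gets which tenant varies from run to run.
        drain(queue, 2).forEach((tenant, instance) ->
                System.out.println(tenant + " -> " + instance));
    }
}
```

One difference to keep in mind: unlike this in-memory queue, Pub/Sub delivers messages at least once by default, so real job handlers should tolerate the occasional duplicate.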

Microservices?

Yeah, we now have a microservices architecture! Or do we? Well, soon we added a few more services—one for notifications, one for IoT data… And what happened? Microservices explosion!

- Hard to manage for our small team

- A jungle of services that no one could keep track of

Microservices 2.0 – We Need Rules

So: When should we split a service?

General reasons:

- Technological heterogeneity

- Resilience

- Scalability

- Easier deployments

- Organizational alignment

- Replaceability

Our reasons:

- Scalability: Large services are hard to manage and scale. Smaller services can be optimized more precisely.

- Resilience: Critical services must be robust and fail-safe.

- Maintainability: Smaller services are easier to maintain and develop further.

Domain-Driven Design (DDD) – Structure with a Purpose

We want clean code with clear boundaries. But not everything needs to be its own service. So: DDD!

A service should have well-defined content, and that content should be properly encapsulated.

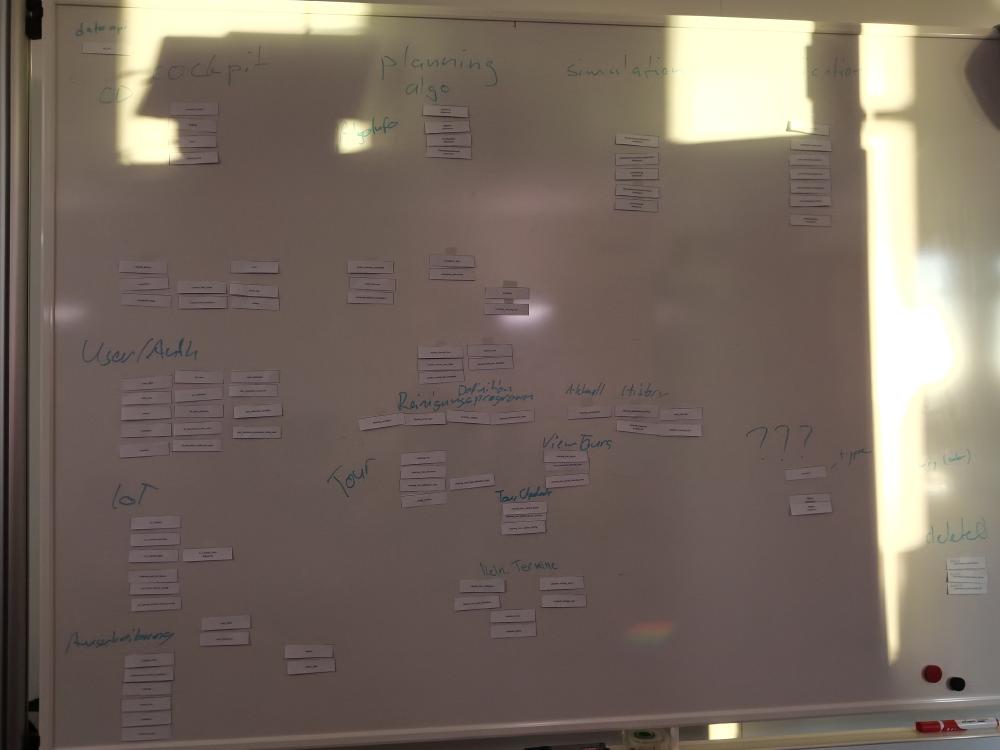

So: Brainstorming! What domains do we have? How do they fit together? Where do we draw the boundaries?

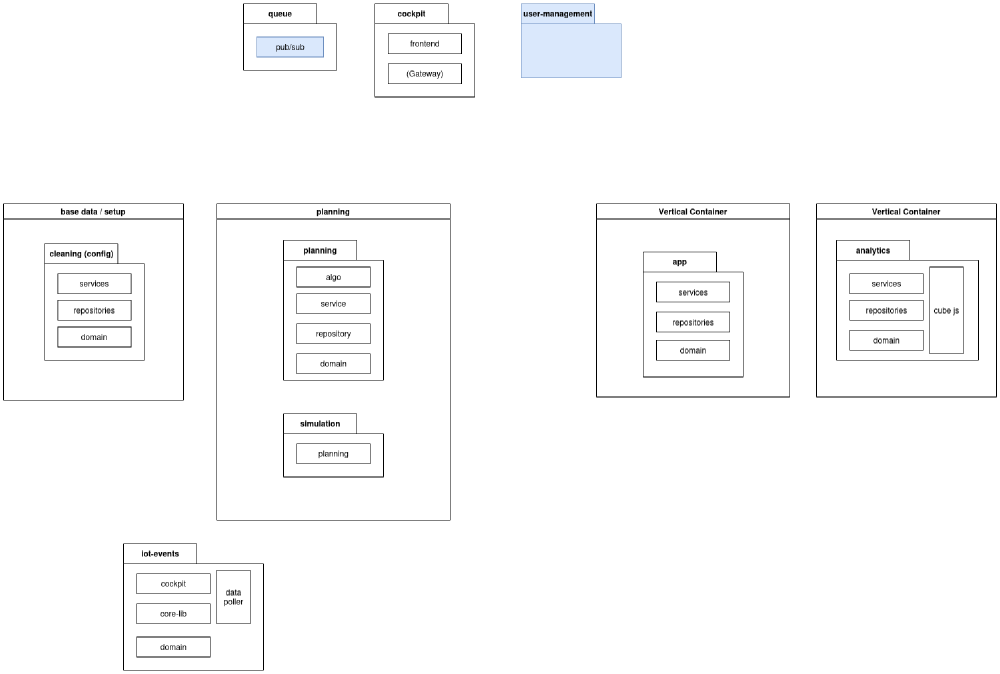

Ideal Architecture

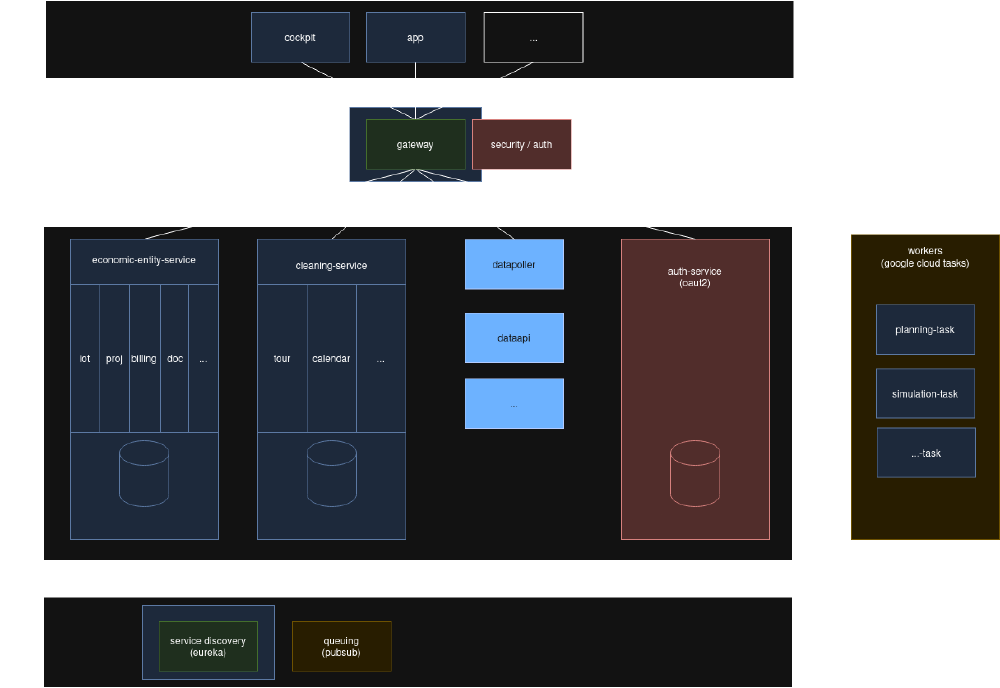

From this process, a new architecture emerged with two main services:

- EconomicEntityService: Covers topics like IoT, billing, documents, and other general domains.

- CleaningService: Includes tours, calendars, area types, and everything cleaning-related.

On top of that come a few applications that access these services through the gateway, plus an authentication service, a few smaller helper services, and the planning and simulation services, which are meant to run as workers.

Implementation – Theory vs. Practice

Drawing nice diagrams was easy—implementation was not.

As soon as we started moving the first domains, it became clear: The code was a mess.

- Domain Layer & Service Layer? Tightly intertwined.

- Entities? Deeply nested in each other.

So: Lots of work, lots of frustration—and a sobering result.

Then the team was downsized (India was out again). And the whole topic? Put on ice…